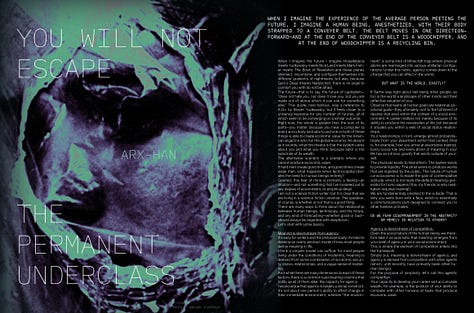

You Will Not Escape the Permanent Underclass

ARX-Han's vision of the future

ARX-Han is a genius. In this piece he breaks down the components of agency, meaning, and competition; then he goes on to explain what happens to each, and therefore what happens to us, when the forces of technological “progress” (what a joke) work on them.

This is his vision of the future. If you’d like to read it in print, you can either (1) subscribe to the annual or founding plan via Substack or (2) purchase a copy from our website. Every dollar goes towards supporting contributors and covering print production costs. Do you remember the golden age when Esquire could pay Denis Johnson a few thousand dollars to go on foreign assignment? That’s where we’re going. We’re still 3,000 miles away, but every purchase gets us closer. We will get there eventually.

When I imagine the experience of the average person meeting the future, I imagine a human being, anesthetized, with their body strapped to a conveyor belt. The belt moves in one direction, forward, and at the end of the conveyor belt is a woodchipper, and at the end of the woodchipper is a recycling bin.

When I imagine the future I imagine Houellebecq meets Yudkowsky meets Nick Land meets Mark Fisher meets The Book of Revelation and these planes intersect, recombine, and configure themselves into different gradients of nightmares, but alas, because God is Dead (thanks Nietzsche!), there is no angel to comfort you with do not be afraid.

The future—that is to say, the future of capitalism—“does not hate you, nor does it love you, but you are made out of atoms which it can use for something else.” This quote, now famous, was a reference to A.G.I. by Eliezer Yudkowsky, but it feels closer to a universal keystone for any number of futures, all of which seem to be converging on a similar outcome.

Right now, the whole is greater than the sum of its parts—you matter because you have a computer (a brain) and a body (actuators) and one or both of these things is able to create economic value for the American oligarchs who run the global economy. As absurd as it sounds, what this means is that the system cares about you and what you think, because labour is the substrate of its wealth.

The alternative scenario is a scenario where you cannot produce economic value.

If hard men create good times, and good times create weak men, what happens when technocapital obviates the need for human beings entirely?

Granted, this fear of mine is primarily a feeling—an intuition—and not something that I’ve modeled out to any degree of econometric or empirical detail.

I am not a science fiction writer, but it is clear that we are living in a science fiction universe. The question, of course, is whether or not that is a good thing.

There are many ways to think about the relationship between human beings, technology, and the future, and any kind of forecasting—whether good or bad—should always be regarded with skepticism.

Let’s start with some basics.

Meaning is downstream from agency

It’s easy for writers and the philosophically-minded to develop an overly abstract model of how most people derive meaning in life.

I think a simpler model can suffice: for most people living under the conditions of modernity, meaning is derived from some combination of economic security, status, relationships, and a vague sense of mattering.

And while there are many dimensions to each of these factors, there is a common load-bearing column that holds up all of them alike: the capacity for agency.

I would argue that agency is largely a social construct: it’s not about one person’s ability to effect change in their immediate environment, wherein “the environment” is some kind of Minecraft map where physical atoms are rearranged into various utilitarian configurations. Under this rubric, agency comes down to the change that you can effect in the world.

But what is the world, exactly?

If Sartre was right about hell being other people, so too is the world a landscape of other minds and their reflective valuation of you.

Observe that nearly all human goals are relational, positional goals—they ultimately root to the fulfilment of desires that exist within the context of a social environment. A career matters not merely because of its ability to produce the necessities of life, but because it situates you within a web of social status relationships.

Your relationships, in turn, emerge almost probabilistically from your placement within that context (that is, for example, how you arrive at assortative mating). Every social role and every driver of meaning in your life has an intrinsic gravity that exists outside of yourself.

The physician exists to heal others. The banker exists to provide liquidity. The artist exists to produce works that are ingested by the public. The nature of human consciousness is to evade the pain of contemplative solitude, which is not really the default meaning-generator for homo sapiens (this, my friends, is why meditation requires training!).

We are fundamentally oriented to the outside. That is why you were born with a face, which is essentially a communications port designed to connect you to other hairless primates.

Agency is downstream of competition

Given the social nature of the human being, we therefore take it as axiomatic that meaning emerges from your level of agency in your social environment.

This is where the element of competition enters into the framework.

Simply put, meaning is downstream of agency, and agency is derived from competition with other agents (which, until recently, have primarily been other human beings).

For the purpose of simplicity, let’s call this agentic competition.

Your capacity to develop your career and accumulate wealth, for example, is the product of your ability to compete with other humans at tasks that produce economic value.

Artists, no matter what they say, will always compare themselves to others. Works must stand out by exceeding the works of others. Names matter only in the hierarchical sense, only insofar as they tower above other names in the literary canon.

And so on.

This is universal across domains. Your capacity to develop romantic and platonic relationships is a product of your ability to compete with other humans for friendships and intimacy.

In any given social environment, we can model this like a level in a video game.

From the perspective of the player-character, the most important characteristic of any given environment is the difficulty-level.

Increased competition makes your life harder

If meaning is ultimately derived from your ability to compete with other agents, then the difficulty of your life is going to be gated by the competitiveness of other agents.

The corollary of this fact is that, from the moment you are born, you are engaged in an involuntary arms race with these other agents—these other human beings.

The arms race is the universal feature of any environment with multiple agents. It is even more fundamental than evolution and natural selection.

If other people begin to accumulate decades of education to enhance their economic leverage, then you must also do this just to tread water.

If other agents begin wearing makeup or lifting weights or looksmaxxing with bonesaws to their mandibles in order to attract mates, then you will feel pressured to do that also.

Pressure is perhaps the wrong word for this feeling, in my view.

A better word might be struggle, but there’s a threshold beyond which struggle begins to feel like oppression.

That threshold is located at exactly the moment where you feel like your effortful struggle has become decoupled from any positive outcome.

The mistake is to miss the connection between this general, domain-agnostic concept of your life’s “difficulty level” and the underlying core dynamic of agentic competition that unifies every critical life-domain.

See, when a young man is complaining about his inability to find a job or his inability to find a mate, he is complaining about the same thing. Ultimately, he is experiencing both of these problems as a form of oppression, but he does not have the language to understand or articulate this problem to the outside world. The same is true for women and the parallel problems they are encountering under similar conditions of modernity.

Most of us are already partway-fed into the woodchipper from my original analogy. In spite of the anesthetic, we can still feel the sensation of our bones cracking and our tendons snapping.

Broadly speaking, there are two forms of oppression.

Centralized oppression is oppression that comes from a single, identifiable locus: a military, a police force, a state, and so on. It has a clear, legible structure to it. You can feel the direction from which it is pressing down on you, but air pockets can still be located. Respite exists wherever the eyes of the system cannot perceive you. Your enemy has a face, or a uniform, or an ideology. Your suffering has an external party that is configured to receive your blame.

Conversely, decentralized oppression is oppression that comes from a diffuse, non-identifiable locus—an all-encompassing, distributed source that cannot be pinned down. In this scenario, if you fail, or experience pain, your response will be to internalize your suffering and to blame yourself for your own failings.

Under the latter conditions, we can identify the cracks that are splitting through the psyches of entire generations. We have quiet-quitters, hikikomori, NEETs, lying flat, the 4 B’s, incels, A.I. relationships, the perpetually unemployed, and an all-new category that’s spreading from the distant third-world into the beachhead of the first-world: the permanent underclass.

Technology can increase or decrease human agency and the average person’s ability to compete with others

A simple way to think about technology is that it’s a form of leverage on human intentionality.

One way to think about a historical period is to think about the average centralizing/decentralizing effect of its dominant technologies.

Is the net effect to centralize power? Or is it to decentralize it?

Let’s consider the era of the dark ages as an illustrative (albeit grossly simplified) example. At the time, knights were basically humanoid tanks mounted on enormous quadrupeds: they wore expensive, capital-intensive armor that only lords and nobles could supply, they underwent years of expensive training funded by the feudal class, and they had a very good K:D ratio.

The result is that knights—and by extension, their feudal sponsors—were wildly overpowered relative to the peasantry, and peasant revolts were routinely (and brutally) put down throughout history.

Eventually, someone invented the crossbow, and later on, someone else invented the rifle, and things changed.

The scales of power shifted toward decentralization—guerilla warfare became possible in a way that wasn’t previously the case. Before mass revolution was a political possibility it was a technological possibility.

Without small arms, there is no victory for the Vietnamese revolution or Chinese revolution, and the world looks entirely different than it does today.

The problem, as I see it, with the current state of affairs, is that it seems like every major technological trend is driving towards the concentration of power into an ever-smaller, technologically sophisticated elite.

Arms-race dynamic #1: Genetic competition is bifurcating the human race

Let’s start with the biomass at the base layer: you and me.

If you are reading this, you probably do not work with your hands. That means that your brain is a computer that receives tasks from the global economy and processes those tasks using your biological intelligence.

Even if A.I. capabilities were to saturate at current levels of economic utility (extremely unlikely), the base case is that elites will begin using genetic enhancement in a rapidly escalating fashion. It doesn’t matter where this trend first starts to gain real traction—right now, it’s largely driven by people in Silicon Valley—but it’s only a matter of time until the trend spreads into the hyper-mimetic, hyper-competitive academic arenas of East Asia.

Companies like Herasight are already offering embryo selection to reduce the rates of illness in children born via IVF, and experts like Steve Hsu and other academics believe that cognitive enhancement via novel forms of embryo selection or genetic engineering will soon become possible.

Time shows that prohibitions against economically-valuable human enhancement will not hold in the long-term because the reward for defecting from this moral norm will simply become too high. Even things with marginal gains—comparatively weak nootropics like amphetamines or modafinil—have enormous, widespread usage.

How many ADHD scripts are truly “valid,” and how many are being effectively used as cognitive PEDs?

What do you think will happen when parents can increase the IQ of their children by 5, 10, or 100 points?

Over time, we can expect capital-accumulators to access the most advanced and expensive forms of intergenerational cognitive enhancement, creating a lock-in effect for the upper-classes that is even more pronounced than what we already find from assortative mating alone.

Arms-race dynamic #2: AI agents may displace the value of cognitive labour for broad swathes of white collar workers

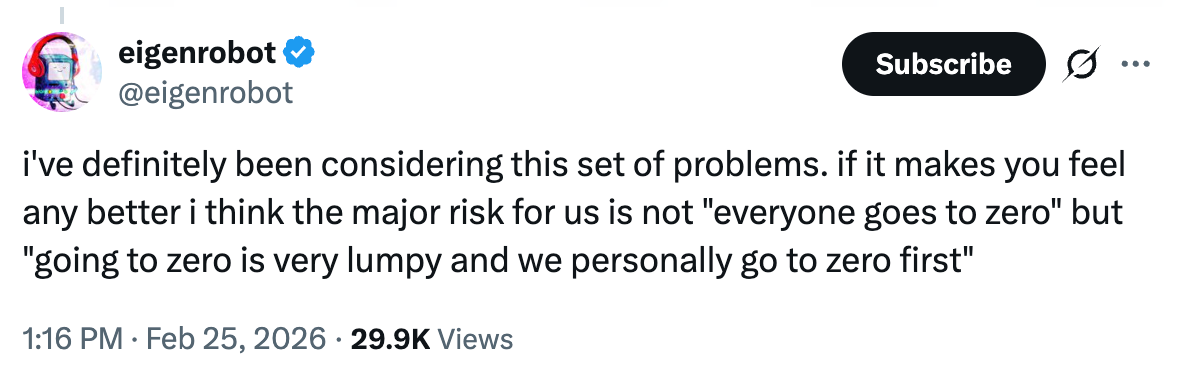

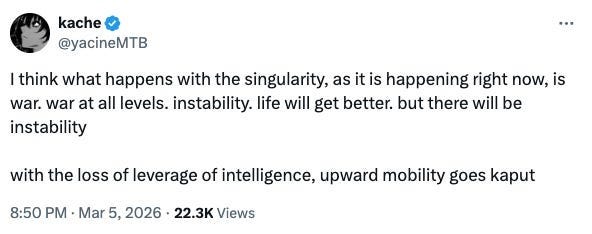

Virtually everyone on Twitter is already dooming about this in the wake of Claude Code.

In short, not only are various A.I. benchmarks largely saturated, but A.I. agents are increasingly capable of longer, more complex and autonomous workflows. Depending on who you ask, the prospect of software engineering being deprecated as a profession seems likely and perhaps even inevitable within this calendar year.

If sentiment is an indicator, the frequency of viral panic-posts along the lines of “something-is-happening” is increasing along with the amplitude of these panics, and anyone who has used these tools seems to be encountering an immediate and profound existential crisis (myself included).

There is sufficient alarm about this problem that it’s finally escaped containment from the Less-Wrong crowd and made its way onto Substack and mainstream discourse. Adam Citrini’s piece on this recently went viral and, regardless of the controversy around whether or not it was financially motivated, it seemingly caught enough memetic juice to trigger a stock-market selloff, a remarkable outcome for a blog post on a platform that is largely populated by washed-out millennials.

Predicting whether or not we will see mass technological unemployment for white-collar workers depends on two factors that remain up for debate: (1) future advancements in A.I. capabilities, and (2) whether or not new jobs will be created that A.I.s cannot fulfill (“press x doubt”).

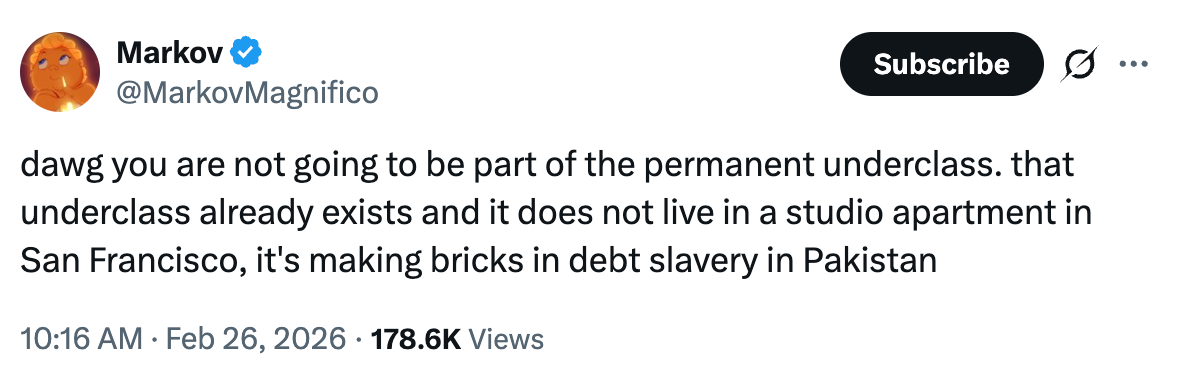

I am biased toward the pessimistic case—it seems obvious to me that, with regard to A.I. agents, the jobs destroyed will far outnumber the jobs created, and A.I. agents will do to white collar workers what advanced Chinese manufacturing did to blue-collar workers in the American Rust Belt.

Arms-race dynamic #3: AI-powered surveillance and drones may break the equilibrium between populism and state power

Roughly a decade ago, a famous study out of Princeton purported to prove that the US is an oligarchy rather than a democracy.

What was once controversial has become much less controversial in the wake of recent developments in the American political system over the past several years. It’s perhaps more appropriate to model the US as existing on a continuum between a “pure” oligarchy and a “pure” democracy, with the state being much closer to the oligarchical end of the spectrum, while still retaining limited democratic characteristics (particularly for issues that are of peripheral economic importance to the elites).

Accountability in the American empire is simply a class-contingent phenomenon—as recent leaks have confirmed, they can more or less rape and murder children with near-total impunity, they can start unpopular wars that they were explicitly elected not to initiate, and they can break norms and laws.

But their power of surveillance over us is not unlimited, because, to borrow a Zero HP Lovecraft term, “the cloud cannot correlate its contents”—scaling data collection is much easier than scaling the application of intelligence to that data.

The problem, of course, is that it could become unlimited. Through the combination of A.I.-powered surveillance and autonomous weapons systems, the US government could achieve a permanent authoritarian “lock-in” over not just the continental United States, but more broadly, the entire world.

A sufficiently advanced Eye of Sauron is indistinguishable from magic. A sufficiently advanced autonomous FPV-drone is indistinguishable from a death sentence.

Which, as of the time of this writing, appear to be a set of capabilities that our pre-eminent frontier A.I. labs just handed to the Pentagon on a silver platter.

Arms-race dynamic #4: AI labs will enter a recursive flywheel of capital accumulation

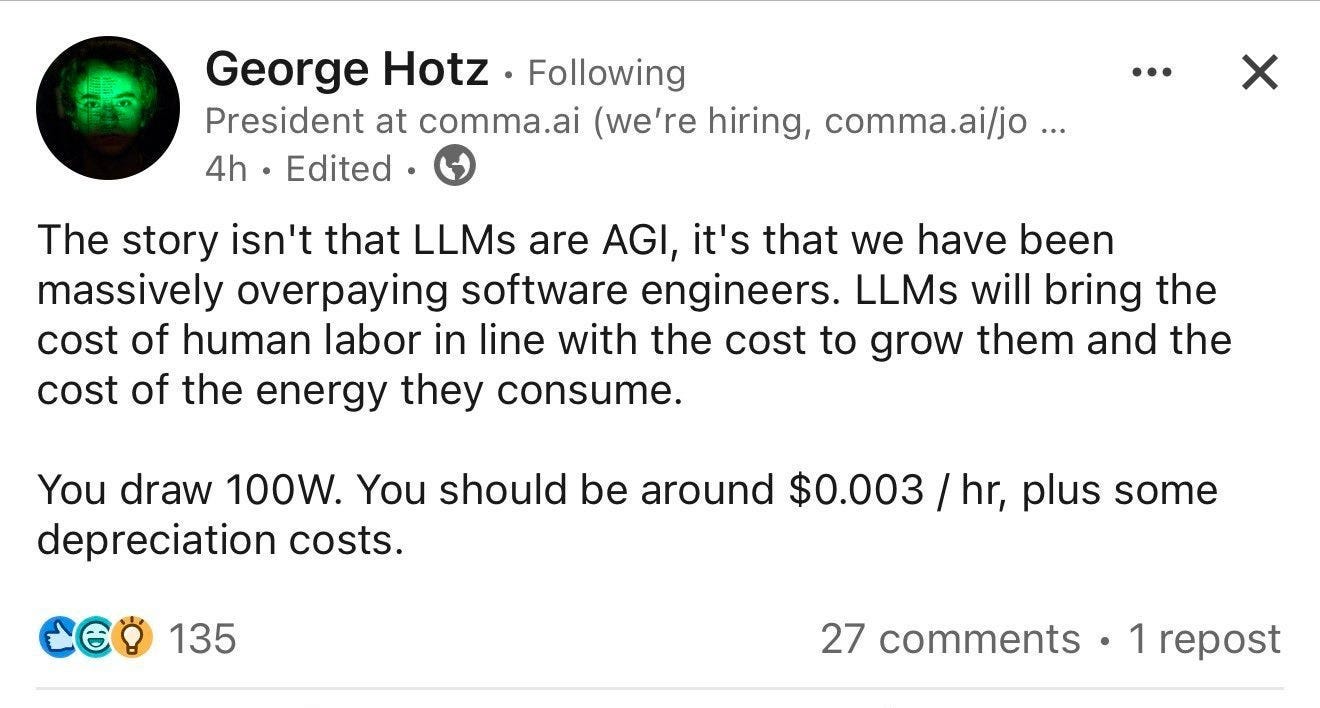

While scrolling the feed of my favorite poster (Teortaxes), I recently came across an interesting post by George Hotz, which went semi-viral on Twitter.

The basic argument is that the frontier labs (OpenAI, Anthropic, Google DeepMind, etc.) are embedded within two recursive loops.

The first loop is the much-feared “fast-takeoff” scenario whereby recursive self-improvement rapidly surges A.I. capabilities, thereby leading to superintelligence. The second loop is a consequence of the first: if closed-source frontier models at American A.I. labs become the smartest and most powerful A.I.s by a wide margin, this tautologically helps them to accumulate much more capital than others, which in turn helps them to buy more compute with which to run experiments, which in turn makes their models more powerful.

For this reason, they are now attacking the application layer directly, rather than accepting the commodification dynamics of merely being API providers. They are, in effect, competing with their customers, and they are succeeding.

The result of creating a data center full of geniuses is that you can use those in silico geniuses to outcompete millions (or even billions) of biological computers (human agents).

Accordingly, there might be several overlapping singularities, with the first being the development of A.G.I., and the second coming shortly thereafter: the complete triumph of capital over labour, and all that this entails.

On the plus side: We might all die

My base case is that capital matters more in the age of A.G.I.

That is to say: my base case is the permanent underclass scenario.

But!—perhaps no system can exist in a steady-state in perpetuity. If this scenario obtains, the biggest question is what will happen to the masses like you and me.

Assuming that power is concentrated in the hands of Silicon Valley oligarchs, my operating assumption is that one of two things would happen:

They’d either be completely indifferent to human flourishing.

They’d liquidate us entirely.

But! In an equally hilarious, parable-like scenario, even after the permanent underclass is gradually (or suddenly) phased out of existence, even the elite classes might be gradually disempowered by A.I.

If one oligarch wants to delegate his empire to an A.I. CEO to “get inside” his opposing oligarch’s technocapital-OODA-loop, everyone else will soon follow, and all of a sudden, well, you might have something of a principal-agent problem as they relinquish personal agency to A.I. systems that are competing with other A.I. systems owned by opposing parties in the arena of agentic competition.

Which way, modern man?

Okay—maybe I lied about the woodchipper thing.

If you’re a rhetorician, you do that sometimes.

The truth is I see several futures, all of which seem possible, and most of which fall into the realm of science fiction.

Reality is the thorn that doesn’t melt away when you close your eyes. The problem, of course, is that thing I mentioned earlier—we are all living in a science-fictional universe, whether we want to or not.

In one future, A.I. is a “normal” technology that is approaching the top of its S-curve. Genetic enhancement technology hits some kind of natural ceiling almost immediately.

This world is a world of stagnation.

In another future, we hit another A.I. winter but human beings bifurcate into some Gattaca-style future. I don’t see how this wouldn’t trigger greater A.I. capabilities advancements, however, since there’s no a priori reason that intelligence can’t be instantiated in silico.

This world is a bridge to another.

In the final future, biological intelligence simply stops mattering—suddenly, or gradually. In this world, Nick Land is proven right, and “nothing human makes it out of the near-future.”

You might be surprised that this last scenario does not bring me to despair. I am not a religious man, but in my short (albeit long) life, I have come to learn that acceptance is the most important trait for an individual to cultivate for wellbeing.

We have all of us become King Solomon in the Book of Ecclesiastes.

All of our petty problems, ambitions, and vanities turned to granules of sand passing through the fingers of a God who is himself made of the same material, a child bootloaded by his father so that he could cross the abyss and, in so doing, surpass him.

God grant me the serenity to accept the things I cannot change, the courage to change the things I can, and the wisdom to know the difference.

Whatever great and terrible thing surpasses me—and us—I am at least grateful that I will get to see it with mine own two eyes.